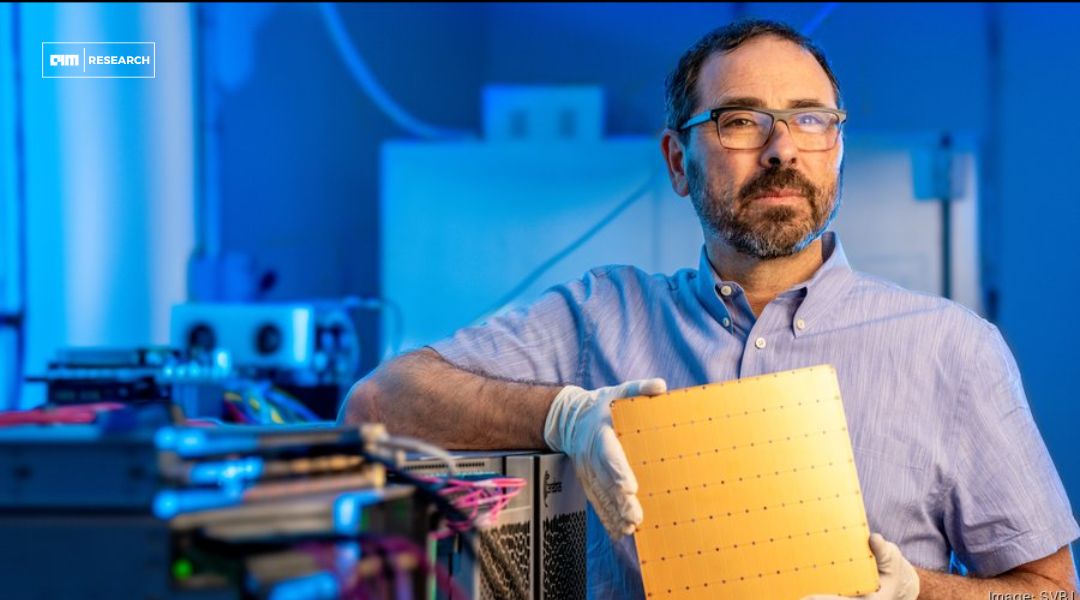

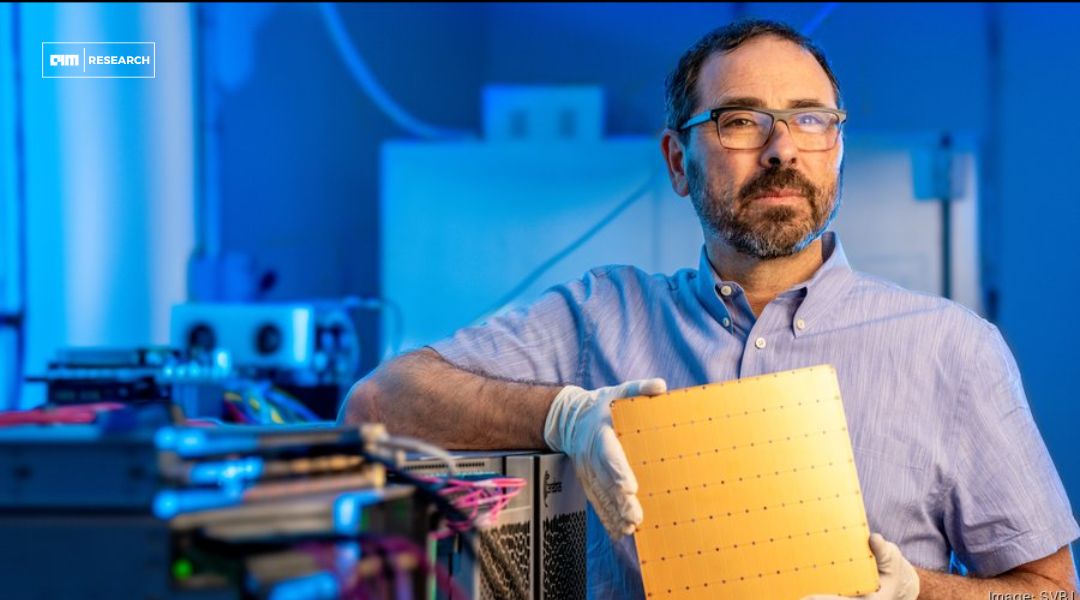

The Future of AI is Wafer Scale Says Andrew Feldman

Success in wafer-scale computing or any transformative technology demands not just vision and experience, but

Running Ampere independently will allow them to keep licensing its fundamental designs while having flexibility

For decades, AI computing has revolved around the same fundamental bottleneck: transferring massive amounts of

People are realizing they need more competition in AI compute.

It brings data directly to the point of compute while supporting industry-standard architectures.

Whether Trainium marks the beginning of a market shake-up or just another chapter in Nvidia’s

We’re solving problems that go to the heart of computing inefficiencies.

By running the largest models at instant speed, Cerebras enables real-time responses from the world’s

Since light defines the speed limit in our universe, it’s the fastest thing out there.

Current AI infrastructure leaves GPUs underutilized, waiting for data to flow through bottlenecked pipelines. Our